Containerization is rapidly becoming a standard for managing applications in large production environments.

Containerization allows our development teams to move fast, deploy software efficiently, and operate at an unprecedented scale.

However, managing containers can be a time-consuming task. That’s where container orchestration technologies, like Kubernetes, come in.

Kubernetes allows organizations to automatically manage their services and deployments. In this article we’ll discuss the key reasons to adopt Kubernetes.

Containerization and Kubernetes

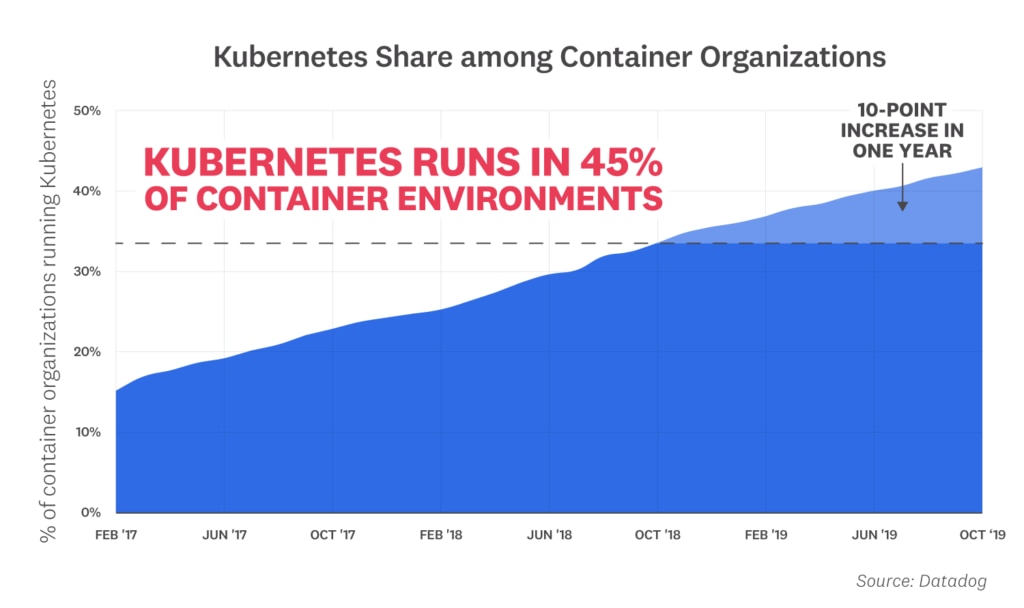

Containers are changing how companies build and run their applications and infrastructure. As containers become more commonplace, they have ushered in more dynamic infrastructure and given rise to a vast array of supporting technologies. Datadog analyzed more than 1.5 billion containers run by thousands of Datadog customers to reveal which technologies, programming languages, and orchestration practices have gained real-world traction in container environments.

Kubernetes (K8s for short) is an open-source container orchestration platform introduced by Google in 2014. It is a successor to Borg, Google’s in-house orchestration system, which embodies over a decade of the company’s experience running large workloads in production. In 2014, Google decided to advance the container ecosystem by sharing Kubernetes with the cloud native community. Kubernetes later became the first project to graduate from the Cloud Native Computing Foundation (CNCF), an organization conceived by Google and the Linux Foundation as the main driver of the emerging cloud native movement.

The platform’s main purpose is to automate the deployment and management (e.g., updates, scaling, security, networking) of containerized applications in large distributed computer clusters. To this end, the platform offers a number of API primitives, deployment options, networking, container and storage interfaces, built-in security, and other useful features.

Kubernetes, at its basic level, is a system for running and coordinating containerized applications across a cluster of machines. It is a platform designed to completely manage the life cycle of containerized applications and services using methods that provide predictability, scalability, and high availability.

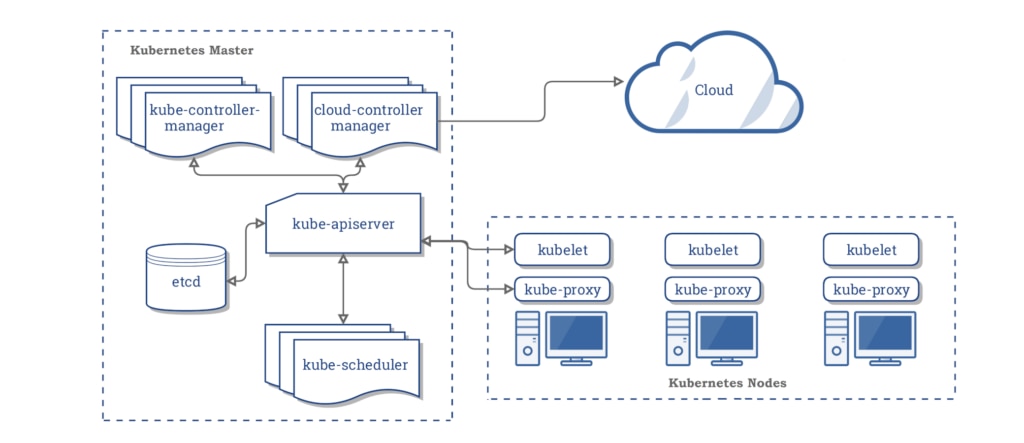

The central component of Kubernetes is the cluster. A cluster is made up of many virtual or physical machines, each serving a specialized function as either a master or a node. Each node hosts groups of one or more containers (which contain your applications), and the master tells nodes when to create or destroy containers. At the same time, it tells nodes how to re-route traffic to match the new placement of containers.
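To make the master and node relationship more concrete, here is a minimal sketch in Python of how a master might decide which containers to create or destroy. It is a toy model, not Kubernetes code: the `Node` class, the `reconcile` function, and the placement policy (create on the least-loaded node, destroy on the most-loaded one) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A worker machine and the containers it currently runs (toy model)."""
    name: str
    containers: list[str] = field(default_factory=list)


def reconcile(nodes: list[Node], app: str, desired: int) -> list[tuple[str, str]]:
    """Return the (action, node_name) steps that bring `app` to `desired` copies.

    Hypothetical policy: create on the least-loaded node and destroy on the
    most-loaded node that runs the app. A real scheduler weighs far more.
    """
    actions = []
    running = sum(n.containers.count(app) for n in nodes)

    # Too few copies: start new containers, one at a time.
    while running < desired:
        target = min(nodes, key=lambda n: len(n.containers))
        target.containers.append(app)
        actions.append(("create", target.name))
        running += 1

    # Too many copies: stop containers, one at a time.
    while running > desired:
        candidates = [n for n in nodes if app in n.containers]
        target = max(candidates, key=lambda n: len(n.containers))
        target.containers.remove(app)
        actions.append(("destroy", target.name))
        running -= 1

    return actions
```

Calling `reconcile` again once the cluster already matches the desired count returns an empty list, which is the point of the pattern: the master keeps comparing desired state with actual state and only acts on the difference.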

Some of Kubernetes’ capabilities include:

- Managing clusters of containers

- Providing tools for deploying applications

- Scaling applications as and when needed

- Managing changes to the existing containerized applications

- Helping to optimize the use of the hardware underlying your containers

- Enabling application components to restart and move across the system as and when needed

Kubernetes provides much more beyond the basic framework, enabling users to choose the type of application frameworks, languages, monitoring and logging tools, and other tools of their choice. Although it is not Platform as a Service, it can be used as a basis for a complete PaaS.

In just a few years, it has become a highly popular tool and one of the biggest success stories in open source.

Many cloud services offer a Kubernetes-based platform or infrastructure as a service (PaaS or IaaS) on which Kubernetes can be deployed as a platform-providing service. Many vendors also provide their own branded Kubernetes distributions.

Reasons to adopt Kubernetes

Kubernetes has some great features that allow you to deploy applications faster with scalability in mind:

- Horizontal infrastructure scaling: New servers can be added or removed easily.

- Auto-scaling: Automatically change the number of running containers, based on CPU utilization or other application-provided metrics.

- Manual scaling: Manually scale the number of running containers through a command or the interface.

- Replication controller: The replication controller makes sure your cluster always has the specified number of pods running. If there are too many pods, the replication controller terminates the extra pods. If there are too few, it starts more pods.

- Health checks and self-healing: Kubernetes checks the health of nodes and containers so that failures are detected early. It also restarts or replaces failed containers and pods automatically, so a single failure does not require manual intervention.

- Traffic routing and load balancing: Traffic routing sends requests to the appropriate containers. Kubernetes also comes with built-in load balancing, so traffic can be spread across containers during outages or periods of high traffic.

- Automated rollouts and rollbacks: Kubernetes rolls out new versions or updates progressively while monitoring the containers’ health, which helps avoid downtime. If a rollout doesn’t go well, Kubernetes can roll the change back.

- Canary deployments: Canary deployments enable you to test the new deployment in production in parallel with the previous version.
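As a rough illustration of the auto-scaling item in the list above, the sketch below computes a new replica count from a metric such as CPU utilization. The core formula, `ceil(current_replicas * current_metric / target_metric)`, follows the one documented for the Kubernetes Horizontal Pod Autoscaler. The function name, parameter names, and default bounds are assumptions for illustration, and the sketch leaves out details such as the autoscaler’s tolerance band and stabilization behavior.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,   # assumed lower bound, for illustration
    max_replicas: int = 10,  # assumed upper bound, for illustration
) -> int:
    """Scale the replica count in proportion to metric pressure.

    If pods average 90% CPU against a 60% target, the count grows by
    roughly 90/60. The result is clamped to [min_replicas, max_replicas].
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Four pods at 90% average CPU with a 60% target scale up to six pods.
print(desired_replicas(4, 90, 60))
```

Clamping to a minimum and maximum keeps a noisy metric from scaling the application to zero or growing it without limit.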

Kubernetes is vendor-agnostic. Many public cloud providers offer not only managed Kubernetes services but also a range of products built on top of them for on-premises container orchestration. Being vendor-agnostic lets operators design, build, and manage multi-cloud and hybrid cloud platforms with less risk of vendor lock-in, which eases the ops team’s worries about a complex multi-cloud or hybrid cloud strategy.

Kubernetes cons

Kubernetes is great but, like anything else, it’s not perfect. Transitioning to Kubernetes comes with a number of challenges that companies seeking to adopt it must address. Here’s our list of cons and challenges.

- Steep learning curve

- Hard to install and configure manually

- No high availability out of the box

- K8s talent may be expensive

Still, despite the cons, Kubernetes remains a great option for container orchestration.

Conclusion

Containerization allows our development teams to move fast, deploy software efficiently, and operate at an unprecedented scale. To organize container deployments, organizations use a container orchestration system like Kubernetes.

Kubernetes’ main function is to automate the deployment and management (e.g., updates, scaling, security, networking) of containerized applications in large distributed computer clusters.

It gives you complete control over container orchestration, enabling you to deploy, maintain and scale application containers across a cluster of hosts.

If you have any questions, contact us today and we’ll help you with your performance and security needs.